Digit recognition is the process by which machines recognize handwritten digits. In the real world, online digit recognition is used to read the amounts on bank cheques, to evaluate numbers filled in by hand on documents such as tax forms, and so on.

A difficulty with handwritten digits is that their style, size, width, and orientation vary from person to person, and even from one attempt to the next by the same writer. This makes it hard for machines to recognize handwritten digits perfectly every time. However, recent progress in machine learning has made it easier for machines to recognize handwritten digits of various sizes, widths, and orientations.

In this article, we will develop a handwritten digit recognition app using the MNIST dataset and the PyTorch framework. We will design a neural network in PyTorch, train it on the MNIST dataset, and finally compile it with deepC to get our desired output.

The MNIST Dataset

The MNIST (Modified National Institute of Standards and Technology) dataset contains 60,000 images in the training set and 10,000 images in the test set, both covering the 10 digits 0–9. Each handwritten digit is stored as a 28×28 image, where each cell holds a greyscale pixel value.

Download Dataset

Divide Dataset
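
One plausible reading of this step is splitting the data into training and test portions and dividing each into mini-batches of 100. The sketch below shows that with `random_split` and `DataLoader`. To stay runnable offline it uses a small synthetic stand-in for MNIST (random tensors), which is an assumption of this example and not the article's data.

```python
import torch
from torch.utils.data import DataLoader, TensorDataset, random_split

BATCH_SIZE = 100  # batch size quoted in the article

# Synthetic stand-in for MNIST (assumption): 1,000 random 1x28x28 "images"
# with random labels in 0-9.
images = torch.rand(1000, 1, 28, 28)
labels = torch.randint(0, 10, (1000,))
dataset = TensorDataset(images, labels)

# Split into training and test portions with a fixed seed for repeatability.
train_set, test_set = random_split(
    dataset, [800, 200], generator=torch.Generator().manual_seed(0)
)

# Shuffle training batches every epoch; keep the test order fixed.
train_loader = DataLoader(train_set, batch_size=BATCH_SIZE, shuffle=True)
test_loader = DataLoader(test_set, batch_size=BATCH_SIZE, shuffle=False)

batch_images, batch_labels = next(iter(train_loader))
print(batch_images.shape, batch_labels.shape)
```

The real MNIST data already ships as separate training and test sets, so with it only the `DataLoader` wrapping would be needed.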

Inspect Dataset
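
A quick inspection usually checks the image shape, the pixel range, and how many samples each digit has. The helper `summarize_batch` below is a hypothetical illustration of such a check; it runs on a random stand-in batch, not on the real MNIST images.

```python
import torch


def summarize_batch(images, labels):
    """Return basic facts about a batch: image shape, pixel range, per-digit counts."""
    return {
        "image_shape": tuple(images.shape[1:]),  # shape of a single image
        "pixel_min": images.min().item(),
        "pixel_max": images.max().item(),
        # Number of samples for each digit 0-9.
        "label_counts": torch.bincount(labels, minlength=10).tolist(),
    }


# Random stand-in batch (assumption): 100 images of shape 1x28x28, values in [0, 1).
torch.manual_seed(0)
images = torch.rand(100, 1, 28, 28)
labels = torch.randint(0, 10, (100,))

summary = summarize_batch(images, labels)
print(summary["image_shape"], summary["label_counts"])
```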

Designing a NN (Neural network) Model

The NN model consists of one input layer, two fully connected hidden layers, and one output layer. The first fully connected layer takes the 784 values (28×28 pixels) from the input layer and passes on 100 values; the second takes those 100 values and produces 10 numeric values, one for each digit (0–9), which are stored in the output layer. The batch size (batch_size) is also 100.
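
The layer sizes described above can be sketched as a small PyTorch module. The class name `DigitClassifier` and the ReLU activation between the two layers are assumptions; the article gives only the layer sizes.

```python
import torch
from torch import nn


class DigitClassifier(nn.Module):
    """Two fully connected layers: 784 -> 100 -> 10."""

    def __init__(self, input_size=784, hidden_size=100, num_classes=10):
        super().__init__()
        self.fc1 = nn.Linear(input_size, hidden_size)
        self.relu = nn.ReLU()  # assumed activation; not named in the article
        self.fc2 = nn.Linear(hidden_size, num_classes)

    def forward(self, x):
        # Flatten each 1x28x28 image into a vector of 784 values.
        x = x.flatten(start_dim=1)
        # Return one raw score (logit) per digit class.
        return self.fc2(self.relu(self.fc1(x)))


model = DigitClassifier()
batch = torch.rand(100, 1, 28, 28)  # one batch of 100 stand-in images
logits = model(batch)
print(logits.shape)  # torch.Size([100, 10])
```

Note that the 100 in the output shape is the batch size, while the 100 inside the model is the width of the hidden layer; the two numbers are independent and only happen to match here.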

The softmax activation function converts the 10 output values into probabilities, and the digit with the highest probability is displayed as the predicted output.
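
As a small worked example, the snippet below applies softmax to one hand-written vector of 10 scores and picks the most probable digit. The score values are made up for illustration.

```python
import torch

# Made-up raw scores for the digits 0-9 (one sample).
logits = torch.tensor([[1.0, 2.0, 0.5, 0.1, 0.0, 4.0, 0.3, 0.2, 1.5, 0.7]])

# Softmax turns the scores into probabilities that sum to 1.
probs = torch.softmax(logits, dim=1)

# The predicted digit is the index of the largest probability.
predicted = probs.argmax(dim=1)
print(predicted.item())  # prints 5
```

Because softmax preserves the ordering of the scores, taking the argmax of the raw scores gives the same prediction; softmax is needed only when the probabilities themselves are of interest.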

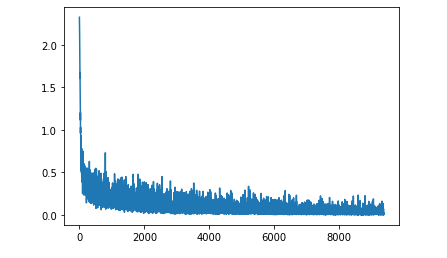

The loss function measures the difference between the desired output and the obtained output. The neural network is trained for 10 epochs, and finally we obtain a test accuracy of 97.71%!
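
A minimal sketch of such a training and evaluation loop follows. The article does not name the loss or the optimizer, so cross-entropy loss and Adam with a learning rate of 1e-3 are assumptions, and the data is a random stand-in so the example runs offline. The accuracy it prints is therefore meaningless and is not the figure reported above.

```python
import torch
from torch import nn
from torch.utils.data import DataLoader, TensorDataset

torch.manual_seed(0)
NUM_EPOCHS = 10   # as in the article
BATCH_SIZE = 100  # as in the article

# Random stand-in data (assumption): 500 flattened images with labels 0-9.
data = TensorDataset(torch.rand(500, 784), torch.randint(0, 10, (500,)))
loader = DataLoader(data, batch_size=BATCH_SIZE, shuffle=True)

model = nn.Sequential(nn.Linear(784, 100), nn.ReLU(), nn.Linear(100, 10))
criterion = nn.CrossEntropyLoss()  # applies log-softmax internally
optimizer = torch.optim.Adam(model.parameters(), lr=1e-3)

epoch_losses = []
for epoch in range(NUM_EPOCHS):
    running_loss = 0.0
    for images, labels in loader:
        optimizer.zero_grad()                    # clear old gradients
        loss = criterion(model(images), labels)  # desired vs. obtained output
        loss.backward()                          # backpropagate
        optimizer.step()                         # update the weights
        running_loss += loss.item()
    epoch_losses.append(running_loss / len(loader))


def accuracy(model, loader):
    """Percentage of samples whose highest-scoring class matches the label."""
    model.eval()
    correct = total = 0
    with torch.no_grad():
        for images, labels in loader:
            predicted = model(images).argmax(dim=1)
            correct += (predicted == labels).sum().item()
            total += labels.size(0)
    return 100.0 * correct / total


acc = accuracy(model, loader)
print(f"accuracy: {acc:.4f}%")
```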

Compiling with deepC:

To run the saved model on a microcontroller (MCU), install deepC, an open-source, vendor-independent deep learning library, compiler, and inference framework for microcomputers and microcontrollers.

Here’s the link to the complete notebook: https://cainvas.ai-tech.systems/use-cases/pytorch-mnist-vision-app/

Written by: Sanlap Dutta
