Human beings experience a wide range of emotions, and we can readily tell them apart. What if I told you that we can expect similar results from an ‘emotion-less’ machine?

KERAS

In this article, we will use a deep learning model to classify two different emotions. The same approach can readily be extended to multi-class classification.

For this project, I worked in Keras and handpicked some images to build the dataset from scratch. Feel free to use a pre-defined dataset of your choice.

PREPROCESSING

As a first step, let’s import the necessary libraries and the dataset. We use the image module from tensorflow.keras.preprocessing to load and work with images.

Next, we define variables to store the paths of the directories we will mainly be working with. We also check the input shape of an image.
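A minimal sketch of this step might look like the following. The directory names are placeholders I have assumed for illustration, and image_shape is a hypothetical helper, not something taken from the original notebook.

```python
import os
from tensorflow.keras.preprocessing import image

# Hypothetical directory layout; replace these with your own paths.
BASE_DIR = "dataset"
TRAIN_DIR = os.path.join(BASE_DIR, "train")
TEST_DIR = os.path.join(BASE_DIR, "test")


def image_shape(path):
    """Load one image and return its array shape as (height, width, channels)."""
    img = image.load_img(path)
    return image.img_to_array(img).shape
```

Calling image_shape on any file in the training folder tells you what size the raw images are, which helps when choosing the target size for the model.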

Now, we augment our data for better results. Don’t forget to check class_indices, which maps each class folder name to its integer label, and classes, which holds the label of every image found.
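Here is one way the augmentation could be set up with ImageDataGenerator. The specific augmentation values, the target size, the batch size, and the make_generators helper are all assumptions on my part, so treat this as a sketch rather than the exact configuration used in the project.

```python
from tensorflow.keras.preprocessing.image import ImageDataGenerator


def make_generators(train_dir, test_dir, target_size=(150, 150), batch_size=16):
    """Return (train, validation) iterators that read one subfolder per class."""
    # Augment only the training images; the values below are illustrative.
    train_datagen = ImageDataGenerator(
        rescale=1.0 / 255,
        shear_range=0.2,
        zoom_range=0.2,
        horizontal_flip=True,
    )
    # Validation images are only rescaled, never augmented.
    test_datagen = ImageDataGenerator(rescale=1.0 / 255)

    train_gen = train_datagen.flow_from_directory(
        train_dir,
        target_size=target_size,
        batch_size=batch_size,
        class_mode="binary",  # two emotions, so a single 0/1 label
    )
    val_gen = test_datagen.flow_from_directory(
        test_dir,
        target_size=target_size,
        batch_size=batch_size,
        class_mode="binary",
        shuffle=False,
    )
    return train_gen, val_gen
```

After creating the generators, printing train_gen.class_indices shows which emotion was assigned label 0 and which was assigned label 1.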

MODEL

Moving on to our model, I compiled it with: loss="binary_crossentropy", optimizer="rmsprop", metrics=["accuracy"]
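To show where these compile settings fit, here is a small convolutional network. Only the loss, optimizer, and metrics come from this article; the layer stack, the input shape, and the build_model name are assumptions for illustration and may differ from the model actually trained.

```python
from tensorflow.keras import Input
from tensorflow.keras.layers import Conv2D, Dense, Dropout, Flatten, MaxPooling2D
from tensorflow.keras.models import Sequential


def build_model(input_shape=(150, 150, 3)):
    """Build and compile a small binary image classifier (illustrative layers)."""
    model = Sequential([
        Input(shape=input_shape),
        Conv2D(32, (3, 3), activation="relu"),
        MaxPooling2D((2, 2)),
        Conv2D(64, (3, 3), activation="relu"),
        MaxPooling2D((2, 2)),
        Flatten(),
        Dense(64, activation="relu"),
        Dropout(0.5),
        # One sigmoid unit outputs the probability of the class labelled 1.
        Dense(1, activation="sigmoid"),
    ])
    model.compile(
        loss="binary_crossentropy",
        optimizer="rmsprop",
        metrics=["accuracy"],
    )
    return model
```

A single sigmoid output pairs naturally with binary_crossentropy. For more than two emotions you would typically switch to a softmax output with one unit per class and a categorical loss.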

I found this video very helpful for building a basic understanding of loss functions, so do have a look.

Finally, I trained my model for 50 epochs. You can experiment with this value to improve accuracy or reduce loss.
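The training call itself can be sketched as below. The train_model wrapper is a hypothetical helper; it simply forwards to model.fit, with the 50 epochs from this article as the default.

```python
def train_model(model, train_data, val_data=None, epochs=50):
    """Fit a compiled Keras model and return its History object."""
    history = model.fit(
        train_data,
        validation_data=val_data,
        epochs=epochs,
    )
    return history
```

The returned history.history dictionary holds the per-epoch loss and accuracy, which is handy for deciding whether more or fewer epochs would help.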

The structure of our sequential model would be somewhat like this:

If your model takes a long time to train, you can save the trained model with model.save and reload it later using load_model from tensorflow.keras.models. Saving your trained model like this is good practice in any case.
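Saving and reloading could look roughly like this. The save_and_reload helper and the file name in the usage note are assumptions for illustration; I use the HDF5 (.h5) format here, though newer Keras versions also support a native .keras format.

```python
from tensorflow.keras.models import load_model


def save_and_reload(model, path):
    """Save a trained model to disk, then load it back from the same file."""
    model.save(path)          # writes architecture, weights, and compile settings
    return load_model(path)   # returns a new model object ready for predict()
```

For example, save_and_reload(model, "emotion_model.h5") writes the file once, and in later sessions a plain load_model("emotion_model.h5") is enough to skip training.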

PREDICTION

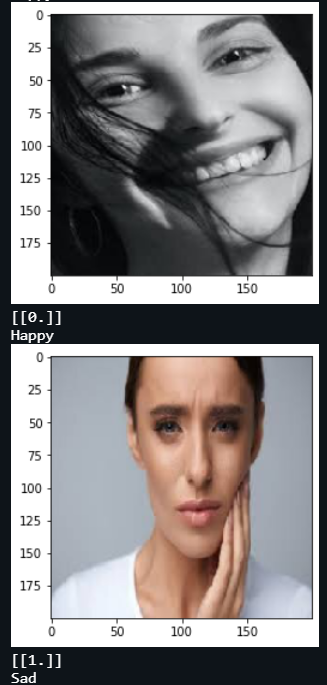

Finally, for prediction I have provided two sets of code:

- The first can be used for facial emotion recognition on a single image.

- The second can be used for checking multiple images at once.

You can refer to the code for more clarity in case of confusion.
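As a rough sketch of both cases, the two helpers below handle a single image and a whole folder. The function names, the 0.5 threshold, the default target size, and the class_names argument (a list ordered by label, for example taken from class_indices) are my assumptions and not necessarily what the original code does.

```python
import os

import numpy as np
from tensorflow.keras.preprocessing import image


def predict_emotion(model, img_path, class_names, target_size=(150, 150)):
    """Return (label, probability of class 1) for a single image file."""
    img = image.load_img(img_path, target_size=target_size)
    x = image.img_to_array(img) / 255.0   # same rescaling as during training
    x = np.expand_dims(x, axis=0)         # add the batch dimension
    prob = float(model.predict(x, verbose=0)[0][0])
    # class_names[1] is the class labelled 1; 0.5 is an assumed threshold.
    return class_names[int(prob >= 0.5)], prob


def predict_folder(model, folder, class_names, target_size=(150, 150)):
    """Run predict_emotion on every image in a folder; return {file: result}."""
    results = {}
    for name in sorted(os.listdir(folder)):
        if name.lower().endswith((".png", ".jpg", ".jpeg")):
            path = os.path.join(folder, name)
            results[name] = predict_emotion(model, path, class_names, target_size)
    return results
```

The target size passed here has to match the input shape the model was trained with, otherwise predict will raise a shape error.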

Here are some results:

Platform: cAInvas

Code: Here

Author: Bhavyaa Sharma
