Object detection is the task of both classifying the objects in an image and localizing each of them, typically with a bounding box.

Object detection plays an important role in many deep learning projects and AIoT applications. Autonomous vehicles need it, security camera surveillance needs it, and even the applications we use for generating PDFs nowadays rely on object detection to detect documents. In short, object detection has a wide range of applications.

Table of Contents

- Introduction to cAInvas

- Importing Data and Necessary Files

- Load The Pre-Trained Model

- Testing The Model

- Introduction to DeepC

- Compilation with DeepC

Introduction to cAInvas

cAInvas is an integrated development platform for creating intelligent edge devices. Not only can we train our deep learning model using TensorFlow, Keras, or PyTorch, but we can also compile it with the platform's edge compiler, DeepC, to deploy the working model on edge devices for production.

The object detection model is also part of the cAInvas gallery, and all the dependencies needed for this project come pre-installed.

cAInvas also offers various other deep learning notebooks in its gallery, which one can use for reference or to gain insight into various deep learning projects. It also has GPU support, which makes it one of the best platforms of its kind.

Importing Data and Necessary Files

When it comes to multi-class object detection, almost everyone relies on pre-trained models such as R-CNN or YOLO. But implementing them and managing all their configuration files, model weights, and so on can be a time-consuming task.

But since this project is already part of the cAInvas gallery, we can easily import everything into our workspace by executing a few commands.

Executing those commands will download the following files to our workspace:

- yolov3.weights

- test_images3.jpg

- test_images2.jpg

- cfg/yolov3.cfg

- coco.names

- yolov3_model.h5

- test_image.jpg

These are all the files we will need for our multi-class object detection problem.
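Before moving on, it can help to confirm that every file in that list actually landed in the workspace. The following is a minimal sketch of such a check; the helper name `missing_files` and the `EXPECTED_FILES` constant are hypothetical and not part of the notebook, while the file names are the ones listed above.

```python
from pathlib import Path
from typing import List

# File names taken from the download list above.
EXPECTED_FILES = [
    "yolov3.weights",
    "test_images3.jpg",
    "test_images2.jpg",
    "cfg/yolov3.cfg",
    "coco.names",
    "yolov3_model.h5",
    "test_image.jpg",
]


def missing_files(workspace: str = ".") -> List[str]:
    """Return the expected files that are not present under `workspace`."""
    root = Path(workspace)
    return [name for name in EXPECTED_FILES if not (root / name).is_file()]
```

Calling `missing_files()` from the notebook's working directory returns an empty list when the download completed, and otherwise names the files that still need to be fetched.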

Load The Pre-Trained Model

Once we have downloaded the necessary files, we will load the pre-trained model. The YOLOv3 model has already been trained on the MS COCO dataset for many epochs and hours of training, so there is no need to train it ourselves; we can simply use the pre-trained model and the weights provided by YOLO.

The next step is to load the YOLOv3 weights and configuration file so that the model can perform multi-class object detection. This takes only a few commands in the notebook.
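As a minimal sketch of this loading step, and not necessarily the commands the notebook itself runs, the snippet below assumes OpenCV's DNN module is installed and uses it to build the network from the Darknet configuration and weights. The function names `load_class_names` and `load_yolov3` are hypothetical; the file paths are the ones downloaded earlier.

```python
from pathlib import Path
from typing import List


def load_class_names(names_path: str = "coco.names") -> List[str]:
    """Read one class label per line, skipping blank lines."""
    text = Path(names_path).read_text(encoding="utf-8")
    return [line.strip() for line in text.splitlines() if line.strip()]


def load_yolov3(cfg_path: str = "cfg/yolov3.cfg",
                weights_path: str = "yolov3.weights"):
    """Build the network from the Darknet config and weights.

    Assumption: OpenCV (cv2) is available. It is imported lazily so that
    load_class_names() can be used even without OpenCV installed.
    """
    import cv2
    return cv2.dnn.readNetFromDarknet(cfg_path, weights_path)
```

In a workspace that contains the downloaded files, `labels = load_class_names()` and `net = load_yolov3()` would give the class labels and a network object ready for inference.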

Testing The Model

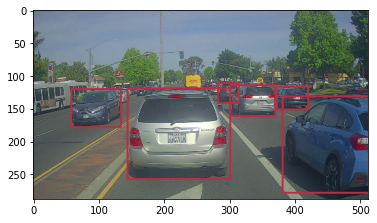

Once we have loaded the configuration files, the model, and its weights, we just have to test the model on the test images that we downloaded earlier. The model performed well, recognizing most of the objects in the image and drawing a bounding box around each of them.
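Behind a result like this, YOLO-style detectors usually produce many overlapping candidate boxes, which are filtered by confidence and then by non-maximum suppression before anything is drawn. The sketch below illustrates that post-processing in plain Python. The `Detection` type, the function names, and the 0.5 and 0.45 thresholds are illustrative assumptions, not values taken from the notebook.

```python
from typing import List, NamedTuple, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


class Detection(NamedTuple):
    label: str
    score: float
    box: Box


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def filter_detections(detections: List[Detection],
                      score_threshold: float = 0.5,
                      iou_threshold: float = 0.45) -> List[Detection]:
    """Drop low-confidence boxes, then apply per-class non-maximum suppression."""
    candidates = sorted(
        (d for d in detections if d.score >= score_threshold),
        key=lambda d: d.score,
        reverse=True,
    )
    kept: List[Detection] = []
    for cand in candidates:
        # Keep a box unless a higher-scoring box of the same class overlaps it.
        overlaps = any(
            cand.label == k.label and iou(cand.box, k.box) > iou_threshold
            for k in kept
        )
        if not overlaps:
            kept.append(cand)
    return kept
```

Suppression is done per class here, so two different objects that happen to overlap, such as a person riding a bicycle, are both kept.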

Introduction to DeepC

The DeepC compiler and inference framework is designed to run deep learning neural networks on small form-factor devices: microcontrollers, eFPGAs, CPUs, and other embedded devices such as Raspberry Pi, ODROID, Arduino, SparkFun Edge, RISC-V boards, mobile phones, and x86 and ARM laptops, among others.

DeepC also offers an ahead-of-time compiler that produces optimized executables. It is based on the LLVM compiler toolchain, specialized for deep neural networks, with ONNX as its front end.

Compilation with DeepC

Now we will compile the yolov3_model.h5 file with the DeepC compiler, which comes as part of the cAInvas platform, so that our saved model is converted to a format that can be easily deployed to edge devices. All of this can be done with a simple command.
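As a hedged sketch of that step, the snippet below assumes the DeepC compiler is exposed on the PATH as a `deepCC` command that takes the saved model file as its only argument. That command name and invocation are assumptions here, not confirmed by this article, and the helper names `build_compile_command` and `compile_model` are hypothetical.

```python
import shutil
import subprocess
from typing import List


def build_compile_command(model_path: str = "yolov3_model.h5") -> List[str]:
    """Build the compiler invocation.

    Assumption: the compiler is available as `deepCC` and accepts the
    model file as a single positional argument.
    """
    return ["deepCC", model_path]


def compile_model(model_path: str = "yolov3_model.h5") -> int:
    """Run the compiler and return its exit code."""
    if shutil.which("deepCC") is None:
        raise FileNotFoundError("deepCC was not found on PATH")
    return subprocess.run(build_compile_command(model_path), check=False).returncode
```

Inside a notebook, the same invocation is typically written as a shell escape instead of a Python call; the wrapper is used here only to keep the example in one language.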

And that’s it: our object detection model is ready to be deployed on edge devices.

Link for the cAInvas Notebook: https://cainvas.ai-tech.systems/use-cases/object-detection-app-using-yolo-v3/

Credit: Ashish Arya
