Grapevines indeed have a spectacular fall colour season. The leaves change colour for several reasons: as daylight shortens and temperatures drop, the leaves stop their food-making process. As the chlorophyll breaks down, the green colour disappears and yellow and red colours become visible, giving the leaves part of their fall splendor.

Enough talk about the science concerning the colour variations of a grape leaf. Our goal is to prepare a deep learning neural network model in order to determine the colour of these leaves by recognizing the images. In order to do that, we first need to get our hands on the dataset. For this, you can access this link.

After selecting our dataset, the next task is to choose a platform that lets us train and prepare DNN models that can be deployed in web apps or IoT devices. For this purpose, we use the Cainvas AITS Platform, which lets us train our models in Jupyter notebooks using highly efficient GPUs.

We will start by importing all the libraries necessary for our training. The following libraries cover our dependencies.

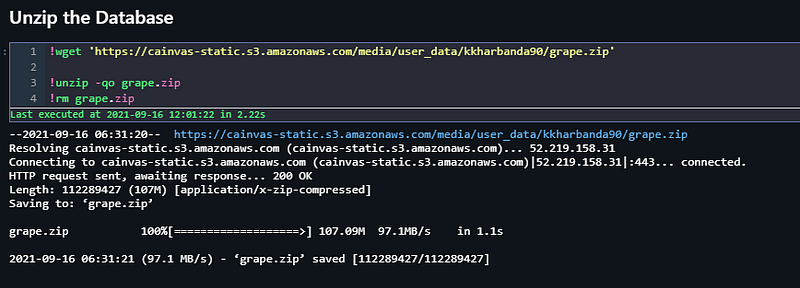

Next, we unzip our data to make it available in our workspace. After this, we define the variables ‘train_path’ and ‘validation_path’ to point to the training and validation splits of the dataset.
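The unzip-and-define-paths step can be sketched with the standard library alone. This is a minimal illustration, not the notebook's actual code: the helper names `extract_dataset` and `split_paths` and the folder names `train` and `validation` are assumptions, so adjust them to match the layout of the archive you download.

```python
import zipfile
from pathlib import Path


def extract_dataset(zip_path, dest_dir):
    """Extract a dataset archive and return the directory it was extracted to."""
    dest = Path(dest_dir)
    dest.mkdir(parents=True, exist_ok=True)
    with zipfile.ZipFile(zip_path) as archive:
        archive.extractall(dest)
    return dest


def split_paths(root, train_name="train", validation_name="validation"):
    """Build the train/validation directories; the folder names are assumptions."""
    root = Path(root)
    return root / train_name, root / validation_name
```

Calling `train_path, validation_path = split_paths(extract_dataset(archive, workspace))` then gives the two variables used in the rest of the walkthrough, where `archive` and `workspace` are whatever locations apply in your environment.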

A very important step before we start preparing our data is to visualize and inspect it. For this part, we define several plotting functions and pass our image directories to them. The images are read using the cv2 library and displayed with the help of matplotlib.

Since our images are few in number, we define training and validation image data generators to expand our dataset. This is done by artificially generating new images from the existing ones: by randomly flipping, rotating, scaling, and performing similar operations, we can create new images.
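To make the idea of augmentation concrete, here is a small NumPy-only sketch of random flips and 90-degree rotations. It is an illustration of the principle, not the Keras generator used in the notebook; the `augment` helper and the tiny stand-in array are assumptions made for the example.

```python
import numpy as np


def augment(image, rng):
    """Return a randomly flipped and rotated copy of an H x W x C image array."""
    out = image
    if rng.random() < 0.5:
        out = np.fliplr(out)  # horizontal flip
    if rng.random() < 0.5:
        out = np.flipud(out)  # vertical flip
    k = int(rng.integers(0, 4))  # rotate by k * 90 degrees
    out = np.rot90(out, k=k, axes=(0, 1))
    return out.copy()


rng = np.random.default_rng(seed=0)
# Stand-in for a real leaf image: a tiny 4 x 4 RGB array.
original = np.arange(4 * 4 * 3, dtype=np.uint8).reshape(4, 4, 3)
variants = [augment(original, rng) for _ in range(5)]
```

Each variant contains exactly the same pixels as the original, only rearranged, which is why augmentation adds variety without adding new labelled data.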

This is done with the help of the ImageDataGenerator class from the Keras library, which is extremely helpful when dealing with image data. Next, we load our training and validation data through these image data generators and set an image input size of 256×256.

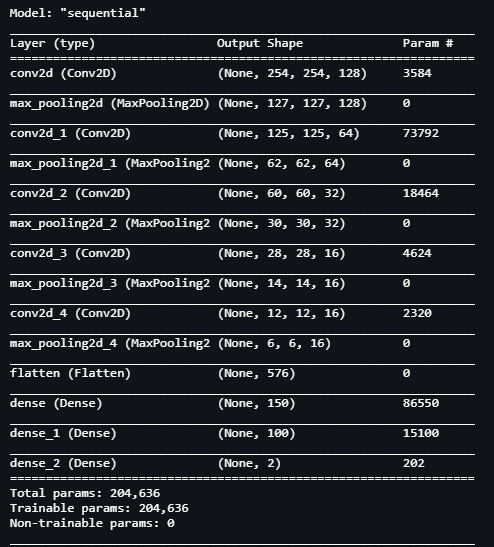

Now that our images have been pre-processed and are ready to be read by our neural network model, we define its architecture. We define a Sequential model with the following layers, which has about 204k trainable parameters.
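For readers wondering where a figure like "204k trainable parameters" comes from, the count is the sum of the weights and biases of each layer. The sketch below shows the standard formulas for convolutional and dense layers. The layer sizes are hypothetical and chosen only for illustration; they do not reproduce the architecture or the parameter count of the model in this article.

```python
def conv2d_params(kernel_h, kernel_w, in_channels, filters):
    """Trainable parameters of a 2D convolution: weights plus one bias per filter."""
    return (kernel_h * kernel_w * in_channels + 1) * filters


def dense_params(in_units, out_units):
    """Trainable parameters of a fully connected layer: weights plus biases."""
    return (in_units + 1) * out_units


# Hypothetical layer sizes, for illustration only.
layer_counts = [
    conv2d_params(3, 3, 3, 16),   # 3x3 kernels on an RGB input, 16 filters
    conv2d_params(3, 3, 16, 32),  # 3x3 kernels on 16 channels, 32 filters
    dense_params(128, 64),        # 128 inputs fully connected to 64 units
]
total_params = sum(layer_counts)
```

Pooling, flattening, and dropout layers add no trainable parameters, so only the convolutional and dense layers contribute to the total.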

With our model architecture ready, we fit the model on the training and validation images with the help of the fit_generator() function (deprecated in recent TensorFlow releases, where fit() accepts generators directly). We set steps per epoch to 15, validation steps to 1, and the number of epochs to 50, and begin training.
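It can help to work out what these settings mean in terms of data seen by the model. The sketch below does the arithmetic; the steps per epoch and epoch count come from the text, while the batch size of 32 is an assumption, since the article does not state it.

```python
def training_schedule(steps_per_epoch, epochs, batch_size):
    """Summarise how many batches and images a training run processes."""
    total_batches = steps_per_epoch * epochs
    return {
        "total_batches": total_batches,
        "images_per_epoch": steps_per_epoch * batch_size,
        "total_images": total_batches * batch_size,
    }


# batch_size=32 is an assumed value; the other two numbers are from the text.
schedule = training_schedule(steps_per_epoch=15, epochs=50, batch_size=32)
```

With augmentation, the images counted here are randomly transformed versions of the originals, so the same source image can appear many times in different forms.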

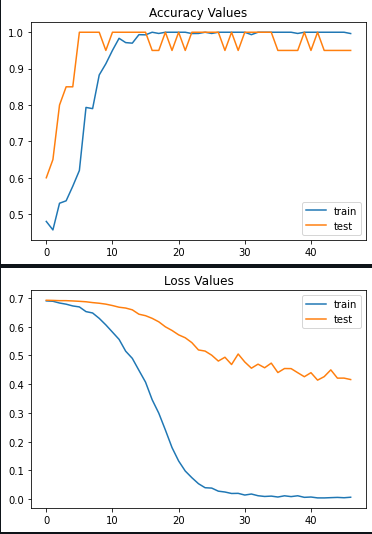

After our model is trained, we see that we’ve achieved the following statistics:

1. Training Accuracy: 100%

2. Validation Accuracy: 100%

3. Training Loss: 0.0

4. Validation Loss: 0.4

We confirm the above metrics by plotting accuracy and loss for both training and validation against the number of epochs.

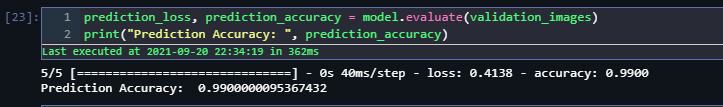

We will use other techniques and metrics as well to determine the performance of our model. Evaluating accuracy on the validation images for our model, we see that it achieves 99% accuracy. These results seem promising. For our final check, let us randomly select an image from each category of images and predict it using the model.
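As a rough sketch of what evaluating accuracy involves, the snippet below takes the class with the highest predicted probability for each image and compares it with the true label. The `accuracy` helper and the sample probabilities are made up for illustration; they are not outputs of the model in this article.

```python
def accuracy(probabilities, labels):
    """Fraction of samples whose highest-probability class matches the label."""
    predicted = [max(range(len(p)), key=p.__getitem__) for p in probabilities]
    correct = sum(int(p == y) for p, y in zip(predicted, labels))
    return correct / len(labels)


# Made-up predictions for four images over two classes.
sample_probabilities = [[0.9, 0.1], [0.2, 0.8], [0.6, 0.4], [0.3, 0.7]]
sample_labels = [0, 1, 1, 1]
score = accuracy(sample_probabilities, sample_labels)
```

In this toy example the third image is misclassified, so three of the four predictions are correct.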

We observe that our model predicts both images correctly. Together with the metrics above, we can say with confidence that our model is highly effective at categorizing these images. We can deploy this machine learning model on IoT devices to determine the leaf colour without human intervention.
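The random pick of one image per category for this final check could be done along the following lines. This is a sketch that assumes one sub-folder per class inside the validation directory; the helper name `sample_one_per_class` and the list of image suffixes are assumptions, not part of the original notebook.

```python
import random
from pathlib import Path

# Assumed set of image file extensions.
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}


def sample_one_per_class(root, rng=None):
    """Pick one random image path from each class sub-folder of root."""
    rng = rng or random.Random()
    picks = {}
    for class_dir in sorted(p for p in Path(root).iterdir() if p.is_dir()):
        images = sorted(
            f for f in class_dir.iterdir() if f.suffix.lower() in IMAGE_SUFFIXES
        )
        if images:  # skip class folders that contain no images
            picks[class_dir.name] = rng.choice(images)
    return picks
```

Each returned path can then be loaded, resized to the model's input size, and passed to the model for prediction.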

Machine Learning and Deep Learning have had a great impact on our lives, and the scope for improvement and growth in this field is vast. We’ve now reached the end of our project. If you want to access the full notebook, please follow this link.

Best of luck with your Machine Learning careers.

Cheers!

Credits: Kkharbanda
