When working with PDFs and scanned documents, a common problem is that pages are hard to read, either because the capture is poor or because a noisy background obscures the text. A document denoising deep learning model coupled with a camera or PDF capture application can be very useful here.

Table of Contents

- Introduction to cAInvas

- Importing the Dataset

- Data Loader

- Model Training

- Introduction to DeepC

- Compilation with DeepC

Introduction to cAInvas

cAInvas is an integrated development platform for creating intelligent edge devices. Not only can we train deep learning models using TensorFlow, Keras or PyTorch, we can also compile them with its edge compiler, DeepC, to deploy working models on edge devices for production.

The Document Denoising project is also part of the cAInvas gallery. All the dependencies needed for this project come pre-installed.

cAInvas also offers various other deep learning notebooks in its gallery, which can be used for reference or to gain insight into deep learning. It also provides GPU support.

Importing the Dataset

One of the key features of cAInvas is its UseCases Gallery. When working on any of its use cases you don’t have to look for data manually, because the dataset can be imported directly into your workspace. To load the data we just have to enter the following commands:

Running the above command loads the labelled data into your workspace, which you will use for model training.

Data Loader

To load the data, we use Python's glob module to collect all the train and test files in our workspace. To build the training and test sets, we create two separate arrays: one holding the greyscale noisy images and one holding the corresponding cleaned images.
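The loading step described above could look roughly like the following. This is a minimal sketch, not the notebook's actual loader: it assumes PNG files, uses Pillow for decoding, and resizes every image to 32×32 (an assumption chosen to match the shapes in the model summary). The function name `load_grayscale_images` is hypothetical.

```python
import glob
import os

import numpy as np
from PIL import Image


def load_grayscale_images(folder, size=(32, 32)):
    """Load every PNG in `folder` as a greyscale array scaled to [0, 1].

    `size` is (width, height); resizing is an assumption of this sketch.
    """
    # Sort the paths so noisy and cleaned files line up by filename.
    paths = sorted(glob.glob(os.path.join(folder, "*.png")))
    images = []
    for path in paths:
        with Image.open(path) as img:
            # "L" is Pillow's 8-bit greyscale mode.
            grey = img.convert("L").resize(size)
            images.append(np.asarray(grey, dtype=np.float32) / 255.0)
    if not images:
        # No matching files: return an empty array with the right rank.
        return np.empty((0, size[1], size[0], 1), dtype=np.float32)
    # Add a trailing channel axis: (n_images, height, width, 1).
    return np.stack(images)[..., np.newaxis]
```

With folders named, say, `train` and `train_cleaned` (hypothetical names), calling `load_grayscale_images("train")` and `load_grayscale_images("train_cleaned")` would give the noisy inputs and the clean targets as two arrays of matching shape.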

Model Training

After creating the dataset, the next step is to pass the training data to our deep learning model so that it learns to produce cleaned images from noisy ones. The model architecture used was:

Model: "sequential"

| Layer (type) | Output Shape | Param # |
| --- | --- | --- |
| conv2d (Conv2D) | (None, 30, 30, 64) | 640 |
| max_pooling2d (MaxPooling2D) | (None, 15, 15, 64) | 0 |
| conv2d_1 (Conv2D) | (None, 15, 15, 32) | 18464 |
| max_pooling2d_1 (MaxPooling2D) | (None, 8, 8, 32) | 0 |
| conv2d_2 (Conv2D) | (None, 8, 8, 32) | 9248 |
| up_sampling2d (UpSampling2D) | (None, 16, 16, 32) | 0 |
| conv2d_3 (Conv2D) | (None, 16, 16, 64) | 18496 |
| up_sampling2d_1 (UpSampling2D) | (None, 32, 32, 64) | 0 |
| conv2d_4 (Conv2D) | (None, 32, 32, 1) | 577 |

Total params: 47,425

Trainable params: 47,425

Non-trainable params: 0

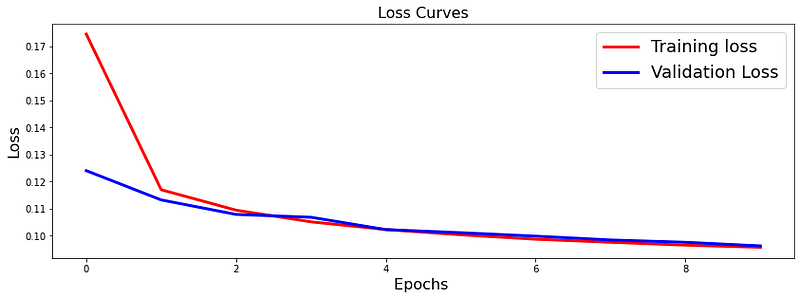
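For reference, here is one way the summary above could be reproduced in Keras. This is a sketch under assumptions rather than the notebook's code: the 32×32×1 input shape, the 3×3 kernels and the padding choices are inferred from the output shapes and parameter counts, while the ReLU and sigmoid activations are guesses (a sigmoid output is a common pairing with binary cross-entropy). The name `build_denoiser` is hypothetical.

```python
from tensorflow import keras
from tensorflow.keras import layers


def build_denoiser(input_shape=(32, 32, 1)):
    """Convolutional autoencoder matching the shapes in the summary."""
    return keras.Sequential([
        keras.Input(shape=input_shape),
        # Encoder: default "valid" padding shrinks 32x32 to 30x30.
        layers.Conv2D(64, 3, activation="relu"),
        layers.MaxPooling2D(2, padding="same"),   # 30 -> 15
        layers.Conv2D(32, 3, activation="relu", padding="same"),
        layers.MaxPooling2D(2, padding="same"),   # 15 -> 8 (rounds up)
        layers.Conv2D(32, 3, activation="relu", padding="same"),
        # Decoder: upsample back to the input resolution.
        layers.UpSampling2D(2),                   # 8 -> 16
        layers.Conv2D(64, 3, activation="relu", padding="same"),
        layers.UpSampling2D(2),                   # 16 -> 32
        # One output channel with values in [0, 1], like the greyscale targets.
        layers.Conv2D(1, 3, activation="sigmoid", padding="same"),
    ])


model = build_denoiser()
model.summary()
```

The parameter counts line up with the table: for example, the first layer has 3·3·1·64 weights plus 64 biases, which gives 640.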

The loss function used was “binary_crossentropy” and the optimizer was “Adam”. We trained the model using the Keras API with TensorFlow as the backend. Here are the training plots for the model:

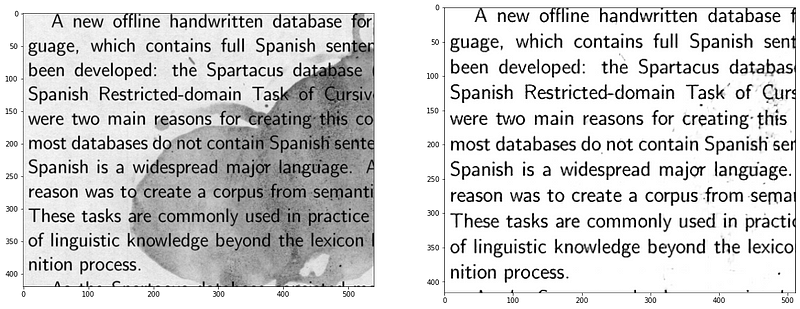

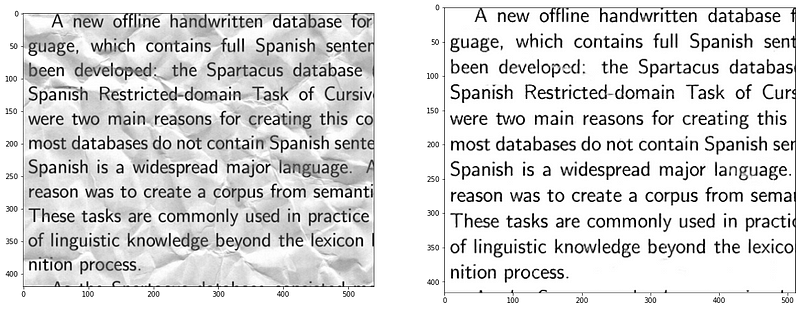
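The training setup named above (binary cross-entropy loss with the Adam optimizer) can be sketched as follows. To keep the example self-contained and quick to run, it uses synthetic random patches and a deliberately tiny stand-in model. The epoch count, batch size and validation split are illustrative assumptions, not the values used in the notebook.

```python
import numpy as np
from tensorflow import keras
from tensorflow.keras import layers

# Synthetic stand-in data: random "clean" patches plus Gaussian noise.
rng = np.random.default_rng(0)
clean = rng.random((64, 32, 32, 1)).astype("float32")
noisy = np.clip(clean + rng.normal(0.0, 0.1, clean.shape), 0.0, 1.0).astype("float32")

# Tiny stand-in model so the sketch runs fast; not the article's architecture.
model = keras.Sequential([
    keras.Input(shape=(32, 32, 1)),
    layers.Conv2D(8, 3, activation="relu", padding="same"),
    layers.Conv2D(1, 3, activation="sigmoid", padding="same"),
])

# Loss and optimizer as named in the article.
model.compile(optimizer="adam", loss="binary_crossentropy")

# Noisy images are the inputs, cleaned images are the targets.
history = model.fit(
    noisy, clean,
    epochs=2, batch_size=16, validation_split=0.25, verbose=0,
)

# history.history holds the per-epoch values behind plots like the ones above.
print(history.history["loss"], history.history["val_loss"])
```

Plotting `history.history["loss"]` against `history.history["val_loss"]` is the usual way to produce training curves of this kind.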

Here are some of the cleaned-up images the model produced from noisy inputs.

Introduction to DeepC

The DeepC compiler and inference framework is designed to run deep learning neural networks on small form-factor devices such as microcontrollers, eFPGAs, CPUs and other embedded devices like Raspberry Pi, Odroid, Arduino, SparkFun Edge, RISC-V, mobile phones, and x86 and ARM laptops, among others.

DeepC also offers an ahead-of-time compiler that produces optimized executables based on the LLVM compiler toolchain, specialized for deep neural networks, with ONNX as the front end.

Compilation with DeepC

After training, the model was saved in H5 format using Keras, since this format stores the weights and model configuration in a single file.
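Saving to H5 and reading the file back might look like this. This is a sketch only: the file name `document_denoising.h5` is hypothetical, the model is a small stand-in, and loading with `compile=False` is a choice made here to restore just the architecture and weights for inference.

```python
import os
import tempfile

import numpy as np
from tensorflow import keras
from tensorflow.keras import layers

# Small stand-in model; in practice this would be the trained denoiser.
model = keras.Sequential([
    keras.Input(shape=(32, 32, 1)),
    layers.Conv2D(8, 3, activation="relu", padding="same"),
    layers.Conv2D(1, 3, activation="sigmoid", padding="same"),
])

# The ".h5" extension tells Keras to write a single HDF5 file
# containing both the architecture and the weights.
path = os.path.join(tempfile.mkdtemp(), "document_denoising.h5")
model.save(path)

# Reload without the training configuration; enough for inference.
restored = keras.models.load_model(path, compile=False)

# Check that the restored model gives the same predictions.
x = np.random.default_rng(0).random((2, 32, 32, 1)).astype("float32")
same = np.allclose(model.predict(x, verbose=0), restored.predict(x, verbose=0))
print("predictions match:", same)
```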

After saving the file in H5 format, we can compile the model with the DeepC compiler, which comes as part of the cAInvas platform, converting the saved model into a format that can be deployed to edge devices. All of this can be done with a single command.

And that’s it: our Document Denoising model is trained and ready for deployment on edge devices.

Link for the cAInvas Notebook: https://cainvas.ai-tech.systems/use-cases/document-denoising-app/

Credit: Ashish Arya
